Introduction to Part 2

This post began as a limited review of what has become known as AI Art. In order to do that, I had to delve deeper into AI in general. Consequently, the post has grown significantly and is now in four parts. Part 1 looked at AI in general. This post, Part 2, will look at more specific issues raised by AI art. Part 3 will look at the topic from the perspective of an artist. Finally, Part 4 will be a gallery of AI-generated images.

This isn’t a consumer review of the various AI art packages available. There are too many, and my budget doesn’t run to subscriptions to enough of them to make such a review meaningful. My main focus is on commonly raised issues such as copyright, or the supposed lack of creativity. I have drawn on only one AI app, Imagine AI, for which I took out a subscription. I tried a few others, using the free versions, but these are usually either shackled by ads or offer only a limited set of capabilities.

Links to each post will be here, once the series is complete.

How do AI art generators work?

What they do is take a string of text and, from that, generate pictures in various styles. How do they achieve that? The short answer is that I have no idea. So, I asked ChatGPT again! (Actually, I asked several times, in different ways.) I’ve edited the responses, so the text below is in my own words, using ChatGPT as a researcher.

In essence there are several steps, each capable of inserting bias into the output.

Data Collection and Preprocessing

The AI art generator is trained on a large dataset of existing images. This can include paintings, drawings, photographs, and more. Generally, each image is paired with a text that in some way describes what the image is about. The data can be noisy and contain inconsistencies, so a certain amount of preprocessing is required. The content of the dataset is critical to the images that can be produced. If it only has people in it, the model won’t be able to generate images of cats or buildings. If the distribution of ethnicities is skewed, so will be the eventual output.
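To make the point about skewed data concrete, here is a minimal sketch of how the balance of a dataset might be checked before training. The caption labels and their counts are invented for illustration; they do not come from any real training set.

```python
from collections import Counter

# Hypothetical caption labels standing in for a scraped training set.
captions = ["person"] * 90 + ["cat"] * 8 + ["building"] * 2


def label_shares(labels):
    """Return each label's share of the dataset as a fraction of the total."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


shares = label_shares(captions)
for label, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{label}: {share:.0%}")

# A subject with no examples at all has no entry for the model to learn from.
print("dog" in shares)
```

A dataset like this one would leave a model with plenty to learn about people, very little about cats or buildings, and nothing at all about dogs.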

Selection of Model Architecture

The ‘model’ is essentially the software that interprets the data and generates the images. There are numerous models in use. The choice of model is critical, because it determines the kind of images the AI art generator can produce. In practice, the model may have several components. A base model might be trained on a large database of images, while a fine-tuning model is used to direct the base model output towards a particular aesthetic.

Training

During training, the AI model learns to capture the underlying patterns and features of the images in the dataset of artworks. How this is done depends on the model in use. It seems, however, that they all depend on a process of comparing randomly generated images with the dataset and refining the generated image to bring it as close as possible to the original.
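The compare-and-refine idea can be shown with a deliberately tiny toy. This is only a sketch of the loop described above, not how any real generator is trained: in a real system it is the model's internal parameters that are adjusted, across millions of images, rather than a single picture. The five-pixel 'image', the function names and the step size are all made up for the example.

```python
import random


def refine_towards(target, steps=200, rate=0.1, seed=0):
    """Start from random pixel values and repeatedly nudge them towards target."""
    rng = random.Random(seed)
    guess = [rng.random() for _ in target]  # the 'randomly generated image'
    for _ in range(steps):
        # Compare with the original and move each pixel a fraction closer.
        guess = [g + rate * (t - g) for g, t in zip(guess, target)]
    return guess


def mean_squared_error(a, b):
    """Average squared difference between two equal-length pixel lists."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


target = [0.0, 0.25, 0.5, 0.75, 1.0]  # a made-up five-pixel 'image'
start = refine_towards(target, steps=0)
end = refine_towards(target, steps=200)

print(mean_squared_error(start, target))  # error of the random starting point
print(mean_squared_error(end, target))    # error after refinement, far smaller
```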

Generating Art

After training, the AI can be used to generate new art. This is a significant task in its own right. The app’s AI model needs to understand the semantics of the text and extract relevant information about the visual elements mentioned. It then combines the information extracted from the text prompt with its own learned knowledge of various visual features, such as objects, colours, textures, and more. This fusion of textual and visual features guides the model in generating an image that corresponds to the given prompt.

Fine-Tuning and Iteration

There is a skill in writing the text prompts. The writer needs to understand how the text-to-image element of the app works in practice. In use, therefore, there is often a need for fine-tuning. Artists may adjust the prompt or other parameters to achieve the results they have in mind. Feedback from this process may also help in the development and refinement of the model.

Style Transfer and Mixing

Some AI art generators allow for style transfer or mixing. The AI will generate a new image based on the content of a specific piece, but in the style of another.

Post-Processing

The generated image may then be subject to further post-processing to achieve specific effects or to edit out artefacts such as extra fingers.

Is it really intelligent?

Many of these apps describe themselves as AI ‘Art’ generators. That is, I think, a gross exaggeration of their capabilities. There is little ‘intelligence’ involved. The system does not know that a given picture shows a dog. It knows that a given image from the training data is associated with a word or phrase, say dog. It doesn’t know that dogs have four legs. Likewise, it doesn’t know anatomy at all. It probably knows that dog images tend to have the shapes we identify as legs, broadly speaking one at each corner, but it doesn’t know why, or how they work, or even which way they should face, except as a pattern.

Importance of training data

Indeed, in the unlikely event of a corruption in the training data, such as identifying every image of a dog as a sheep, and vice versa, the AI would still function perfectly, but the sheep would be herding the dogs. If the dataset did not include any pictures of dogs, it could not generate a picture of a dog.
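The label swap can be sketched in a few lines. The 'model' here is just a lookup table, which real generators are not; it is only meant to show that the association between word and image comes entirely from the labels. The function names and placeholder image strings are hypothetical.

```python
def train(pairs):
    """Build a toy 'model': a mapping from each label to the images seen with it."""
    model = {}
    for image, label in pairs:
        model.setdefault(label, []).append(image)
    return model


def generate(model, prompt):
    """Return what the toy model associates with the prompt, or None if unseen."""
    images = model.get(prompt)
    return images[0] if images else None


correct = [("<dog photo>", "dog"), ("<sheep photo>", "sheep")]
# Corrupt the data by swapping every 'dog' label with 'sheep' and vice versa.
swapped = [(image, "sheep" if label == "dog" else "dog") for image, label in correct]

print(generate(train(correct), "dog"))  # <dog photo>
print(generate(train(swapped), "dog"))  # <sheep photo>
print(generate(train(correct), "cat"))  # None
```

The toy works just as happily with the swapped labels, and it has nothing to offer for a word it has never seen.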

On top of that, if there is any scope for confusion in the text prompts, these programs will find it. To be fair, humans are not very good at understanding texts either, as five minutes on Twitter will demonstrate. Even so, I’m sure that art AI will get better, technically at least. It will even learn to count limbs and mouths.

Whatever we call it, we know real challenges are coming. Convincing ‘deep fake’ videos are already possible. I’m guessing that making them involves some human intervention at the end to smooth out the anomalies. That will change, at which point the film studios will start to invest.

We are still a long way from General AI though. An art AI can’t yet be redeployed on medical research, even if some of the pattern matching components are similar.

Is AI art, theft?

These apps do not generate anything from nothing. They depend upon images created by third parties, which have been scraped from the web, with associated text. It is often claimed that this dependency is plagiarism or breach of copyright. There are several class-action lawsuits pending in the US, arguing just that.

Lawsuits

These claims include:

- Violation of the sections of the Digital Millennium Copyright Act (DMCA) covering the stripping of copyright-related information from images

- Direct copyright infringement by training the AI on the scraped images, and reproducing and distributing derivative works of those images

- Vicarious copyright infringement for allowing users to create and sell fake works of well-known artists (essentially impersonating the artists)

- Violation of the statutory and common law rights of publicity related to the ability to request art in the style of a specific artist

Misconceived claims

It is difficult to see how they can succeed, but once cases get to court, aberrant decisions are not exactly rare. For what it’s worth, though, my comments are below (IANAL).

- The argument about stripping images of copyright information seems to be based on an assumption the images are retained. If no version of an image exists without the copyright data, how is it stripped?

- The link between the original data and the images created using the AI seems extremely tenuous and ignores the role of the text prompts, which are in themselves original and subject to copyright protection.

- A style cannot be copyrighted. The law does not protect an idea, only the expression of an idea. In prosaic terms, the idea of a vacuum cleaner cannot be copyrighted, but the design of a given machine can be. If a given user creates images in the style of a known artist, that is not, of itself, a breach of copyright. If they attempt to pass off that image as the work of that artist, it is dishonesty on the part of the user, not the AI company. This is no different to any other case of forgery. Suing the AI company is like suing the manufacturer of inks used by a forger.

- If style cannot be protected, how can it be a breach to ask for something in that style?

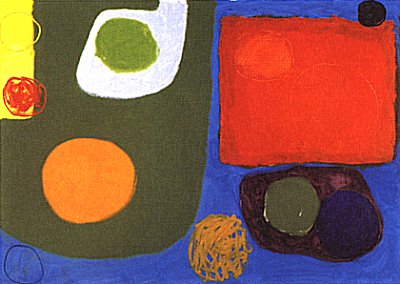

Essentially, the claims seem to be based on the premise that the output is just a mash-up of the training data. They argue that the AI is basically just a giant archive of compressed images from which, when given a text prompt, it “interpolates” or combines the images in its archives to provide its output. The complaint actually uses the term “collage tool” throughout. This sort of claim is, of course, widely expressed on the internet. It rests, though, in my view, on a flawed understanding of how these programs really work. For that reason, the claim that the outputs are in direct correlation with the inputs doesn’t stand. For example, see this comparison of the outputs from two different AIs using the same input data.

As the IP lawyer linked above suggests:

…it may well be argued that the “use” of any one image from the training data is de minimis and/or not substantial enough to call the output a derivative work of any one image. Of course, none of this even begins to touch on the user’s contribution to the output via the specificity of the text prompt. There is some sense in which it’s true that there is no Stable Diffusion without the training data, but there is equally some sense in which there is no Stable Diffusion without users pouring their own creative energy into its prompts.

In passing, I have never found a match for any image I have generated using these apps on Google Lens or TinEye. I haven’t checked every one, only a sample, but enough to suggest the ‘use’ of the original data is, indeed, de minimis, since it can’t actually be identified. Usually I would see lots of other AI-generated images. I suspect this says more about originality than any claims to the copying of styles.

I suppose, if an artist consistently uses a specific motif, such as Terence Cuneo’s mouse, it could be argued there was a copyright issue, but even then I can’t see such an argument getting very far. If someone included a mouse in a painting with the specific intent of passing it off as by Cuneo, that is forgery, not breach of copyright.

Pre-AI examples

This situation isn’t unique. Long before AI was anything but science fiction, I saw an image posted on Flickr of a covered bridge somewhere in New England. The artist concerned had taken hundreds, perhaps thousands, of other people’s photos of the same bridge, a well-known landmark, all posted on Flickr, and digitally layered them together. He had not sought prior approval. The final image was a soft, misty concoction only just recognisable as a structure, let alone a bridge. The discussion was fierce, with multiple accusations of theft, threats of legal action and so on.

In practice, though, what was the breach? No one could positively identify their original work. Even if an individual image was removed, it seems highly unlikely that there would be any discernible impact on the image. I would argue that the use of images from the internet to ‘train’ the AI is analogous to the artist’s use of the original photos of that bridge. In the absence of any identifiable and proven use of an image, there is no actionable breach.

Who has the rights to the image?

An additional complication, in the UK at least, stems from the fact that, unlike in many countries, the law makes express provision for copyright protection of computer-generated works. Where a work is “generated by computer in circumstances where there is no human author”, the author of such a work is “the person by whom the arrangements necessary for the creation of the work are undertaken”. Protection lasts for 50 years from the date the work is made.

It could be argued that in the case of AI art packages, the person making the necessary arrangements is the person writing the text prompt. As yet, that hasn’t been tested in a UK court.

See Also

A paper produced by the Tate Legal and Copyright Department. I can give no assurance it is still current.

https://www.tate.org.uk/research/tate-papers/08/digitisation-and-conservation-overview-of-copyright-and-moral-rights-in-uk-law

Is AI use of training data, moral?

Broader issues of morality are also often raised. There are two aspects to this.

Legal Moral Rights

There are moral rights within copyright legislation. Article 6bis of the Berne Convention says:

Independent of the author’s economic rights, and even after the transfer of the said rights, the author shall have the right to claim authorship of the work and to object to any distortion, modification of, or other derogatory action in relation to the said work, which would be prejudicial to the author’s honor or reputation.

If the use of a specific work in an AI generated image cannot be identified or even proven to be there in the first place, it is difficult to believe that its use in that way is ‘prejudicial to the author’s honor or reputation’.

Broader morality

There is also a broader moral issue. Is it ethical to use someone else’s work, unacknowledged and without remuneration, to create something else? As with all moral arguments, there is no definitive answer. This Instagram account is interesting in that respect.

There is a fine line between taking inspiration and copying. That line is not changed by the existence of AI. Copying of artistic works has a long tradition. As Andrew Marr says in this Observer article, “the history of painting is also the history of copying, both literally and by quotation, of appropriation, of modifying, working jointly and in teams, reworking and borrowing.”

The iconic portrait of Henry VIII is actually a copy. The original, by Hans Holbein, was destroyed by fire in 1698, but is still well known because of the many copies. It is probably one of the most famous portraits of any English or British monarch. Copying of other works has also been a long-standing method of teaching.

Is it acceptable to sell copies of other people’s work?

That of course raises the question of whether AI art is a copy. Setting that aside, it also takes us back to the issue of forgery, or the intent of the copyist. For many years, the Chinese village of Dafen is supposed to have been the source of 60% of all art sold worldwide. Now the artists working there are turning to making original work for the Chinese market. Their huge sales of copies over the decades suggest that buyers have no objection to buying copies. None of those copies pretended to be anything else.

Giclée a scam?

Many artists sell copies of their own work, via so-called ‘giclée’ (i.e. inkjet) reproductions. The marketing of these reproductions often seems to stray close to the line, with widespread use of empty phrases, even if impressively arty sounding – ‘limited edition fine art print’ and similar. I’ve even seen a reputable gallery offering a monoprint in an edition of 50. There was no explanation, in the description, of how this miraculous work of art was made. It was of course an inkjet reproduction. To be accurate, there was an explanation, but it was on a separate page. There was no link from the sale page.

Ignoring the fact that these are not prints as an artist-printmaker would expect to see them, the language and marketing methods used are designed to obscure the fact that these are not, of themselves, works of art, but copies of works of art.

In that context, I believe an anonymous painted copy of a Van Gogh to be more honest about what it is, than an inkjet reproduction of an oil painting, by a modern minor artist. It at least has some creative input directly into it, whereas the reproduction is pretty much a mechanical copy. I’ll return to this in Part 3.

Bias in AI art

The possibility of bias in AI in general is a real cause for concern. In the specific case of AI art, the problem may be less immediately obvious, but as AI art is used more widely, the representations it generates will become problematic if they are biased towards particular groups or cultures. One remedy would be to increase transparency about data sources. If the datasets used to train AI models are not representative or diverse enough, the AI output is likely to be biased or even unfair in the representations created.

Issues likely to affect the dataset include:

- A lack of balance in the representation of gender, age, ethnicity and culture

- A lack of awareness of historical bias, which can then become replicated in the AI

- Labels attached to images during preprocessing or dataset creation that inaccurately describe the content of images or are influenced by subjective judgments, so that these biases are perpetuated in the model

- Changes to the AI model after deployment may introduce bias if not properly managed and documented.

Lack of transparency may lead to other problems:

- AI systems often work as “black boxes”. They provide results without explaining how those results were obtained.

- Difficulty in meeting regulatory requirements such as on data sources

- Poor documentation of the data sources and data handling procedures, preprocessing steps, and algorithms used in the AI.

- Inability to demonstrate the existence of clear user consent mechanisms, and adherence to data protection regulations (e.g., GDPR)

These can all lead to poor accountability and lack of trust.

How does this relate to AI?

AI, as it currently stands, does not copy existing works. Nor does it collage together parts of multiple works. Somehow, and I do not pretend to understand the technical process, it manages to generate new images. They may become repetitive. They may, especially the pseudo-photographs, reveal their AI origin, but despite all that they somehow produce work which is not a direct copy – i.e. original.

For the future, much depends on the direction of development. Will these apps move towards autonomy, capable of autonomous generation of images on the basis of a written brief from a client? Or will they move towards becoming a tool for use by artists and designers, supporting and extending work in ‘traditional’ media? They are not mutually exclusive, so in the end the response from the market will be decisive.