This is the last of four posts dealing with AI and AI Art. It takes a different form to the previous three. In this post, I look only at the output from AI Art apps, without regard to how it works or what issues its use might raise. The post concludes with an overall assessment of AI art and my reactions.

In part 3, I briefly described how I tested the app, and mentioned the problems experienced with the output. This post was intended to expand on that, giving example text prompts and the resulting image. However, the theory and the practice have proved very different.

I’m sure I will be returning to the topic. I will try to come back here to add new links.

Testing the AI art app

To recap, my initial aim in testing the AI art app was to push it as far as possible. I was not necessarily trying to generate useable images. The prompts I wrote:

- brought together named people, who could never have met in real life, and put them in unlikely situations.

- were sometimes deliberately vague.

- were written with male and female versions.

- used a variety of ethnicities and ages.

- used single characters, and multiple characters interacting with each other.

- used characters in combination with props and/or animals.

- used a range of different settings.

I realised I also needed to test the capacity of the AI to generate an image to a precise brief. This is, I believe, the area where AI art is likely to have the most impact. Doing this proved much harder than I expected.

In essence, generating an attractive image with a single character does not require a complex prompt. I suspect this is already being used by self-publishers on sites like Amazon.

Creating more complex images, at least with Imagine AI, is much more difficult. There are ways around the problem, but these require use of special syntax. This takes the writing of the prompt into a form of coding for which documentation is minimal.

Talking to the AI art app

This problem of human-AI communication is not something I’ve given any real thought to, beyond fiddling with the text prompt. This paper addresses one aspect of it. From this, it became clear that the text prompt used in AI art apps, and the query used by the likes of ChatGPT, are not used in their original form. The initial text (in what is termed Natural Language or NL) has to be translated into logical form first. Only then can it be turned into actions by the AI, namely the image generation, although that glosses over a huge area of other complex programming.

This is a continuously evolving area of research. As things stand, the models used have difficulty in capturing the meaning of prompt modifiers. This mirrors my own difficulties. The paper is part of the effort to allow the use of Natural Language without the need to employ special syntax or terms.

Research into HCI

The research described in this paper points towards six different types of prompt modifiers used in the text-to-image art community. These are:

- subject terms,

- image prompts,

- style modifiers,

- quality boosters,

- repeating terms, and

- magic terms.

This taxonomy reflects the community’s practical experience of prompt modifiers.

The paper’s author made extensive use of what he calls ‘grey literature’. Grey literature is materials and research produced by organizations outside of the traditional commercial or academic publishing and distribution channels. In the case of AI art, much is available from the companies developing the apps. This from Stable Diffusion and this, deal with prompt writing.

Both of them take a similar approach to preparing the text prompt. They suggest organising the content into categories, which could be mapped onto the list of prompt modifiers referred to above.
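To make the idea of organising a prompt into categories concrete, here is a minimal sketch in Python. It is my own illustration, not something taken from the paper or from either guide. The class name, the field names and the "highly detailed" quality booster are all assumptions. Image prompts and repeating terms are left out to keep it short.

```python
from dataclasses import dataclass, field


@dataclass
class PromptParts:
    """Hypothetical container for a text prompt split into categories."""
    subject: str
    style_modifiers: list = field(default_factory=list)
    quality_boosters: list = field(default_factory=list)
    magic_terms: list = field(default_factory=list)

    def render(self) -> str:
        # Join the categories in a fixed order, skipping any empty entries.
        parts = [self.subject, *self.style_modifiers,
                 *self.quality_boosters, *self.magic_terms]
        return ", ".join(p for p in parts if p)


prompt = PromptParts(
    subject="Building inspired by nautilus shell",
    style_modifiers=["art nouveau"],
    quality_boosters=["highly detailed"],  # illustrative term, an assumption
)
print(prompt.render())
```

Keeping the categories separate makes it easier to change one element, such as the style, while leaving the rest of the prompt alone.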

The text-to-image community

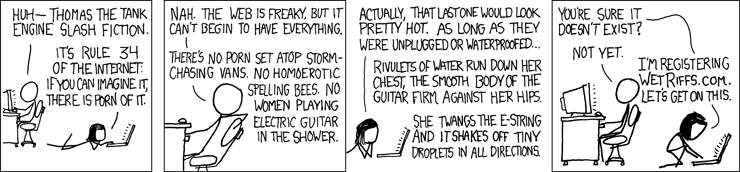

As with any sphere of interest, there seems to be a strong online community. Given the nature of this particular activity, they post their images on social media. Some of this, being tactful, is best described as ‘specialist’. Actual porn is generally locked down by the AI companies. That doesn’t stop people pushing the boundaries, of course. If you decide to explore the online world of AI art, expect lots of anime in the shape of big-chested young women in flimsy clothing. From the few images I’ve seen which made it past the barrier, the files used for training the app must have included NSFW (Not Safe For Work) images. What we get is not quite Rule 34, but skates close…

https://imgs.xkcd.com/comics/rule_34.png

What else?

It’s not all improbable girls, though. The Discord server for the Imagine AI art app has a wide range of channels. These include nature, animals, architecture, food, and fashion design as well as the usual SF, horror etc. The range of work posted is quite remarkable in isolation, but in the end quite samey. Posters tend not to share the prompt alongside the image. It isn’t clear therefore if this is a shortcoming in the AI, or a reflection of the comparatively narrow interests of those using the app.

Judging by the public response to AI, it seems unlikely that many artists in other media are using it with serious intent. That too will bias users to a particular mind set. Reading between the lines of the posts on Discord, my guess is that they tend to be young and male. Again, this limited user base will affect the nature of images made.

The output from the AI app

The problems I described above have prevented the sort of systematic evaluation I planned. A step-by-step description of the process isn’t practical. It takes too long. The highest quality model on Imagine is restricted to 100 image generations in a day, for example. I hit that barrier while testing one prompt, still without succeeding.

In addition, I did a lot of this work before I decided to write about it, so I only have broad details of the prompts I used. I posted many of those images on Instagram in an account I created specifically for this purpose.

https://www.instagram.com/ianbertram_ai_

Generic Prompts

I began with some generic situations, adding variations as shown in brackets at the end of each prompt. In some cases, I inserted named people into the scenario. An example:

- A figure walking down a street (M/F and various ages, physique, ethnicities, hair style/colour, style of dress)
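I wrote the variations by hand, but the same idea can be sketched in a few lines of Python. This is only an illustration. The template wording and the lists of variations are my assumptions, and nothing here talks to the app itself.

```python
from itertools import product


def expand_prompt(template, variations):
    """Return one prompt for every combination of the listed variations."""
    keys = list(variations)
    prompts = []
    for combo in product(*(variations[k] for k in keys)):
        # Pair each placeholder name with the value chosen for this combination.
        prompts.append(template.format(**dict(zip(keys, combo))))
    return prompts


# Hypothetical variations for the street scene.
variants = expand_prompt(
    "A {age} {gender} walking down a street",
    {"age": ["young", "middle-aged"], "gender": ["man", "woman"]},
)
for line in variants:
    print(line)
```

Each extra list multiplies the number of prompts, so with a daily cap on image generations it pays to keep the lists short.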

Capturing a likeness

I wanted to see how well the app caught the likeness of well-known people. Putting them in impossible, or at least unlikely, situations would push the app even further. An example:

- Marilyn Monroe dancing the tango with Patrick Stewart. I also tried Humphrey Bogart, Donald Trump and Winston Churchill.

I discovered a way to blend the likenesses of two people. This enables me to create a composite which can be carried through into several images. Without that, the AI would generate a new face each time. The numbers in the example are the respective weights given to the two people in making the image. If one is much better known than the other, the results may not be predictable, but should still be consistent:

- (Person A: 0.4) (Person B: 0.6) sitting at a cafe table.
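The weighted form above is easy to build from a list of names and weights. The sketch below simply reproduces the text pattern shown in the example. The function name is my own, and I have not checked whether other apps accept the same syntax.

```python
def blend_prompt(weights, scene):
    """Format '(Name: weight)' pairs followed by the scene description."""
    blended = " ".join(f"({name}: {weight})" for name, weight in weights.items())
    return f"{blended} {scene}"


text = blend_prompt({"Person A": 0.4, "Person B": 0.6}, "sitting at a cafe table.")
print(text)
```

Reusing the same names and weights with a different scene is what keeps the composite face consistent from one image to the next.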

Practical applications

I also wanted to test the possibility of using the app for illustrations such as book covers, magazine graphics etc. Examples:

- Man in his 50s with close-cropped black hair and a beard, wearing a yellow three-piece suit, standing at a crowded bar

- Woman in her 50s with dark hair, cut in a bob, wearing a green sweater, sitting alone at a table in a bar.

- Building, inspired by nautilus shell, art nouveau, Gaudi, Mucha, Sagrada Familia

To really push things, I wrote prompts drawn from texts intended for other purposes. Examples:

- Lyrics to Dylan’s Visions of Johanna

- Extracts from the Mars Trilogy by Kim Stanley Robinson

- T S Eliot’s The Waste Land

I tried using random phrases, drawn from the news and whatever else was around, and finally random lists of unrelated words.

Worked example

This post would become too long if I included examples of everything from the list above, which is already shortened. Instead, I will show examples from a single prompt, including some of the versions I produced as I developed it. The prompt is designed to create the base image for a book cover. The story relates to three young people who become emotionally entangled as a consequence of an SF event. (It is a novel I’m currently writing.)

Initial prompt:

Young man in his 20s, white, cropped brown hair, young woman, in her 20s, mixed race, afro, young woman in her 20s, white, curly red hair

This didn’t work. The output never showed three characters, and often only one. If I wasn’t trying to get a specific image, they would be fine as generic illustrations.

Shifting away from photo realism, this one might have been nice, ethnicities apart, but for one significant flaw…

Next version

In order to get three characters, I obviously needed to be more precise. So I held off on the physical details in an attempt to get the basic composition right. After lots of fiddling and tweaking, I ended up with this:

(((Young white man))), 26, facing towards the camera, standing behind (((two young women))), both about 24, facing each other

The brackets are a way to add priority to those elements with strength from 1 (*) to 3 (((*))).
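As a small illustration of the bracket syntax, this Python sketch wraps a phrase in one to three pairs of brackets. The function name is my own invention, and the limit of three comes only from what I saw in Imagine. Other apps may treat brackets differently.

```python
def emphasise(phrase, strength=1):
    """Wrap a phrase in 1 to 3 pairs of round brackets to raise its priority."""
    if not 1 <= strength <= 3:
        raise ValueError("strength must be 1, 2 or 3")
    return "(" * strength + phrase + ")" * strength


composition = ", ".join([
    emphasise("Young white man", 3),
    "26, facing towards the camera, standing behind " + emphasise("two young women", 3),
    "both about 24, facing each other",
])
print(composition)
```

Building the prompt this way makes it easy to raise or lower the priority of one element without retyping the whole thing.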

The image I got wasn’t perfect, but it was workable and certainly the closest so far.

Refining the prompt

My next step was refining the appearance, which proved equally problematic:

(((young white man))), cropped brown hair, in his 20s, facing towards the camera, standing behind (((a young black woman))), ((afro hair)), in her 20s, facing (((a young white woman, curly red hair))), (((the two women are holding hands)))

I got nowhere with this. I usually got images where the man was standing between two black girls. In one, a girl was wearing a bikini for some reason. In another, she was wearing strange trousers, with one leg shorts, the other full length. I also got one with the composition I wanted, but with three women.

More attempts and tweaks failed. The closest to a usable image was this, using what the app calls a pop art style. I eventually gave up. If there is a way to generate an image with three distinct figures in it, I have yet to find it.

Gallery

This section is simply a slideshow of other images generated by the AI in testing. They are in no particular order, but show some of the possibilities, in terms of image quality. If only the image could be better matched to the prompt…

Consumer interest

I relaunched my Etsy shop, to test the market, so far without success. I haven’t put a lot of effort into this, so probably not a fair test. References to sales from the shop are to the previous version. At the time of writing, no AI output has sold. This is the URL:

THIS SHOP IS NOW CLOSED

I also noticed on Etsy, and in adverts on social media, what looks like a developing market in prompts, with offerings of up to 100 in a variety of genres. These are usually offered at a very low price. The differing syntax used by the different apps may be an issue, but I haven’t bought anything to check. I saw, too, a number offering prompts to generate NSFW images. I’m not sure how they bypass the AI companies’ restrictions. Imagine, at least, seems to vet both the prompt and the output.

Overall Conclusions

It’s art, Jim, but not as we know it

In Part 3, I asked ‘Is AI Art, Art?’ It’s clear that many of those in the AI art community consider the answer to be yes. They even raise similar concerns to ‘traditional’ artists about posting on social media, the risk of theft etc. The more I look, the more I think they have a point. The art is not, I believe, in the image alone, but in the entire process. It is not digital painting. It is, in effect, a new art form.

Making the images, getting them to a satisfactory state, is a sort of negotiation with the AI. It requires skill and creative talent. It requires not just simple language fluency, but an analytic approach to the language which allows the components of the description to be disaggregated in a specific way. Making AI art also requires an artistic eye to assess the images generated and to judge what is needed to refine them, both in terms of the prompt and the image itself.

The State of the art

As things stand, AI art is far from being a click and go product. Paradoxically, it is that imperfection which triggers the creativity. It means users develop an addictive, game-like mind set, puzzling away at finding just the right form of words. In Part 3, I referred to Wittgenstein and his definition of games. This seemed a way into looking at the many forms taken by art. A later definition, by Bernard Suits, is “the voluntary attempt to overcome unnecessary obstacles.” This could be applied to poetry, for example, with poetic forms like the sonnet.

Writing the prompt is very similar. It needs to fit a prescribed format, with specific patterns of syntax. In this post, I wrote about breaking the creative block by working within self-imposed, arbitrary rules. The imperfect text-to-image system, as it currently stands, is, in effect, the unnecessary obstacle that triggers creative effort.

The future

It seems inevitable that the problems of Human-AI communication will be resolved. AIs will then be able to understand natural language. I don’t know if we will ever get a fully autonomous AI art program. It certainly wouldn’t be high on my To-Do list. We don’t need it. A better AI, able to understand natural language and generate art, without the effort it currently takes, would be a mixed blessing. It would, however minimally, offer an opportunity for creativity to people who, for whatever reason, don’t believe themselves to be creative. On the other hand, too many jobs and occupations have already had the creativity stripped from them by automation and computerisation. Stronger AIs are going to accelerate that process.

It’s easy to say, ‘new jobs will be created’, but those jobs usually go to a different set of people. Development of better, but still weak, AIs will be disruptive. With genuine strong AI, all bets are off. We cannot predict what will happen. It is possible that so many jobs will be engineered away by strong AI that we will be grateful for the entertainment value, alone, of deliberately weak AI art apps and games.